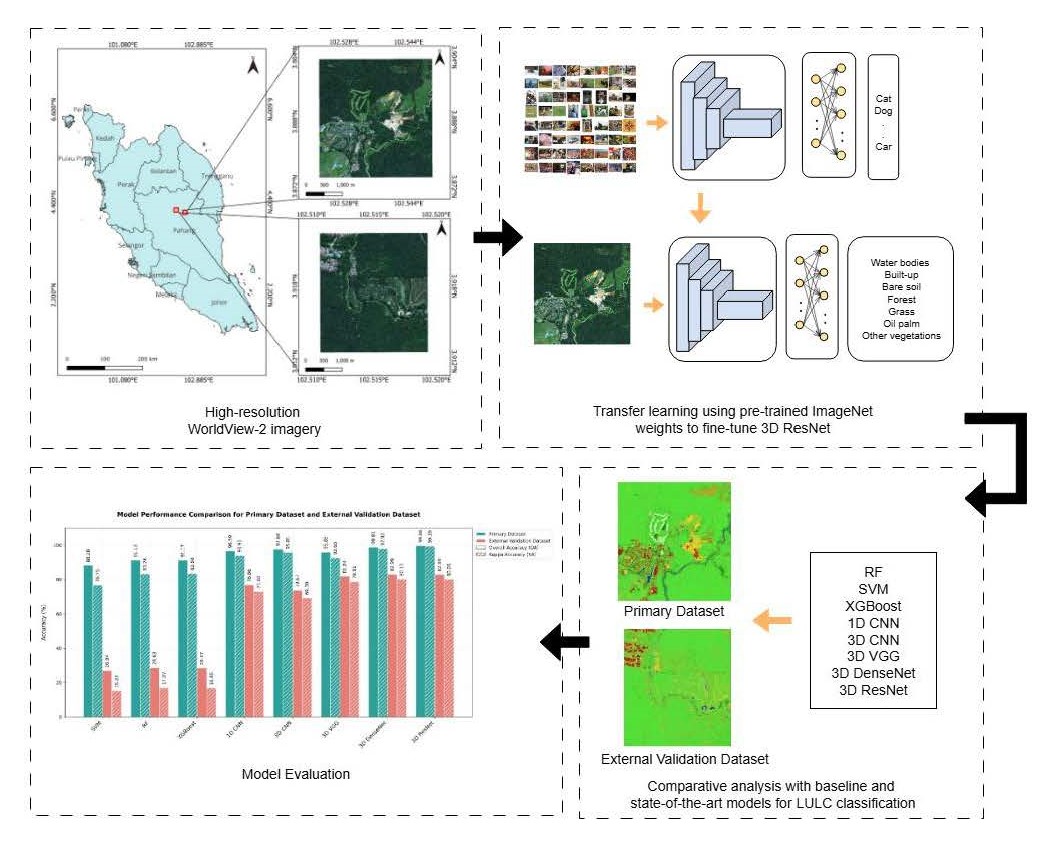

Enhancing Multispectral Land Use and Land Cover Classification with Transfer Learning and 3D ResNet

DOI:

https://doi.org/10.22452/mjs.vol44no4.3

Keywords:

convolutional neural network, deep learning, land cover classification, multispectral, transfer learning

Abstract

Recent advances in land use and land cover (LULC) classification with remote sensing imagery are driven by state-of-the-art models such as Convolutional Neural Networks (CNNs). Advanced CNN architectures such as ResNet can improve overall classification performance by incorporating residual skip connections. Combining 3D feature extraction with the ResNet architecture may therefore improve classification further. This paper explores the potential of the 3D ResNet model for LULC classification, comparing it with baseline approaches (Support Vector Machine, Random Forest, XGBoost, 1D CNN, 3D CNN) and state-of-the-art 3D models (3D VGG, 3D DenseNet) using WorldView-2 satellite imagery. The 3D ResNet-18 model, fine-tuned via transfer learning on multispectral images, demonstrates significant improvements in classification performance over machine learning models. It achieves the highest Overall Accuracy (OA) of 99.66% and Kappa Accuracy (KA) of 99.39% on the primary dataset. Although it performs slightly worse than 3D DenseNet on the external validation dataset (OA: 82.89%, KA: 80.05%), it is highly efficient, with processing times of 490.2 minutes and 3.6 minutes for the two datasets, respectively. McNemar’s test shows that the classification performance of 3D ResNet and 3D DenseNet differs significantly (p < 0.05) from that of the other models, consistently across both datasets.
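The abstract reports Overall Accuracy, Kappa, and McNemar's test without stating how they are computed. The following is a minimal sketch under the usual textbook definitions: OA as the fraction of correctly labelled samples, Cohen's kappa as agreement corrected for chance, and McNemar's chi-square statistic with continuity correction. The function names and the toy labels are hypothetical and are not taken from the paper; the authors may have used a different McNemar variant (for example, the exact binomial form).

```python
import math
from collections import Counter


def overall_accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label equals the reference label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def cohen_kappa(y_true, y_pred):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(y_true)
    p_o = overall_accuracy(y_true, y_pred)
    true_counts = Counter(y_true)
    pred_counts = Counter(y_pred)
    # Chance agreement from the marginal class frequencies.
    p_e = sum(true_counts[c] * pred_counts[c] for c in true_counts) / (n * n)
    if p_e == 1.0:
        # Degenerate case: a single class in both label sets.
        return 1.0
    return (p_o - p_e) / (1.0 - p_e)


def mcnemar_test(y_true, pred_a, pred_b):
    """McNemar's chi-square test (continuity-corrected) for two classifiers.

    Returns (statistic, p_value). Only samples on which exactly one of the
    two classifiers is correct contribute to the statistic.
    """
    # b: model A correct, model B wrong; c: model A wrong, model B correct.
    b = sum(a == t and m != t for t, a, m in zip(y_true, pred_a, pred_b))
    c = sum(a != t and m == t for t, a, m in zip(y_true, pred_a, pred_b))
    if b + c == 0:
        return 0.0, 1.0
    stat = (abs(b - c) - 1) ** 2 / (b + c)
    # Survival function of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value


# Toy labels, made up for illustration only (not data from the paper).
y_true = [0, 0, 0, 0, 1, 1, 1, 1, 2, 2]
pred_a = [0, 0, 0, 0, 1, 1, 1, 1, 2, 2]
pred_b = [0, 0, 1, 1, 1, 1, 2, 2, 2, 0]

oa_b = overall_accuracy(y_true, pred_b)
kappa_b = cohen_kappa(y_true, pred_b)
stat, p_value = mcnemar_test(y_true, pred_a, pred_b)
print(f"OA={oa_b:.4f} kappa={kappa_b:.4f} McNemar chi2={stat:.3f} p={p_value:.4f}")
```

A p-value below 0.05 would indicate that the two classifiers make significantly different errors on the same samples, which is how the abstract's significance statement is normally read.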


License

Copyright (c) 2025 Malaysian Journal of Science

This work is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License.

Transfer of Copyrights

- In the event of publication of the manuscript entitled [INSERT MANUSCRIPT TITLE AND REF NO.] in the Malaysian Journal of Science, I hereby transfer copyrights of the manuscript title, abstract and contents to the Malaysian Journal of Science and the Faculty of Science, University of Malaya (as the publisher) for the full legal term of copyright and any renewals thereof throughout the world in any format, and any media for communication.

Conditions of Publication

- I hereby state that the manuscript to be published is an original work that has not previously been published in any form, and that I have obtained the necessary permission to reproduce (or am the owner of) any images, illustrations, tables, charts, figures, maps, photographs and other visual materials whose copyright is owned by a third party.

- This manuscript contains no statements that contradict the relevant local and international laws or that infringe on the rights of others.

- I agree to indemnify the Malaysian Journal of Science and the Faculty of Science, University of Malaya (as the publisher) in the event of any claims that arise with regard to the above conditions, and I assume full liability for the published manuscript.

Reviewer’s Responsibilities

- Reviewers must treat manuscripts received for review as confidential. Manuscripts must not be shown to or discussed with others without authorization from the editor of MJS.

- Reviewers assigned must not have conflicts of interest with respect to the original work, the authors of the article or the research funding.

- Reviewers should evaluate manuscripts as objectively as possible. Feedback from reviewers should be expressed clearly, with supporting arguments.

- If the assigned reviewer considers themselves not able to complete the review of the manuscript, they must communicate with the editor, so that the manuscript could be sent to another suitable reviewer.

Copyright: Rights of the Author(s)

- Effective 2007, it will become the policy of the Malaysian Journal of Science (published by the Faculty of Science, University of Malaya) to obtain copyrights of all manuscripts published. This is to facilitate:

  - Protection against copyright infringement of the manuscript through copyright breaches or piracy.

  - Timely handling of reproduction requests from authorized third parties that are addressed directly to the Faculty of Science, University of Malaya.

- As the author, you may publish the aforementioned manuscript, in whole or in part, provided that the copyright notice is acknowledged and the first publication in the Malaysian Journal of Science and Faculty of Science, University of Malaya (as the publishers) is referenced. You may produce copies of your manuscript, in whole or in part, for teaching purposes or to be provided, on an individual basis, to fellow researchers.

- You may include the aforementioned manuscript, in whole or in part, electronically on a secure network at your affiliated institution, provided that the copyright notice is acknowledged and the first publication in the Malaysian Journal of Science and Faculty of Science, University of Malaya (as the publishers) is referenced.

- You may include the aforementioned manuscript, in whole or in part, on the World Wide Web, provided that the copyright notice is acknowledged and the first publication in the Malaysian Journal of Science and Faculty of Science, University of Malaya (as the publishers) is referenced.

- In the event that your manuscript, whole or any part thereof, has been requested to be reproduced, for any purpose or in any form approved by the Malaysian Journal of Science and Faculty of Science, University of Malaya (as the publishers), you will be informed. It is requested that any changes to your contact details (especially e-mail addresses) are made known.

Copyright: Role and responsibility of the Author(s)

- If the manuscript to be published in the Malaysian Journal of Science contains material previously copyrighted by others, it is the responsibility of the current author(s) to obtain written permission from the copyright owner or owners.

- This written permission should be submitted with the proof copy of the manuscript to be published in the Malaysian Journal of Science.

Licensing Policy

Malaysian Journal of Science is an open-access journal that follows the Creative Commons Attribution-NonCommercial 4.0 International License (CC BY-NC 4.0).

CC BY-NC 4.0: Under this licence, reusers may distribute, remix, alter, and build upon the content in any media or format for non-commercial purposes only, as long as proper acknowledgement is given to the authors of the original work. Please take the time to read the whole licence agreement (https://creativecommons.org/licenses/by-nc/4.0/legalcode).